The Power of Hyperscale Computing

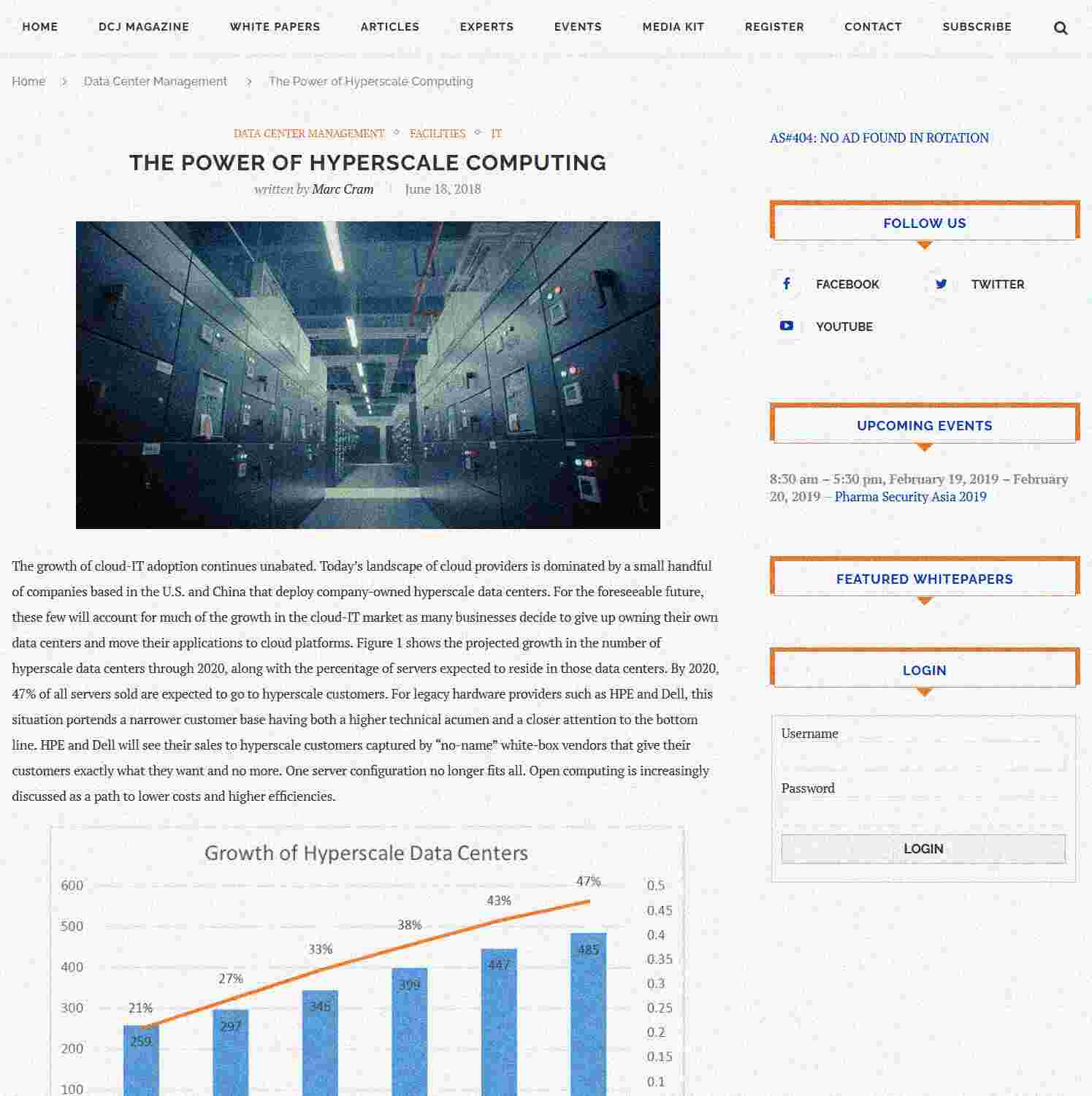

Originally written in June 2018, this article spotlighted hyperscale computing and its expected growth in the coming years. It highlighted key hyperscalers such as Microsoft, Amazon, Facebook, Google and Alibaba, and examined how power density and cooling affect hyperscale design and how those challenges can be addressed.

Did you know...

Equinix operates four facilities in Florida, making it the state's biggest provider. Of Florida's 53 data centers, 28 are carrier neutral and 25 are not. If you are looking for remote hands services, 32 of Florida's facilities offer them, 26 have offices to rent, and 34 have cabinets for your server racks.